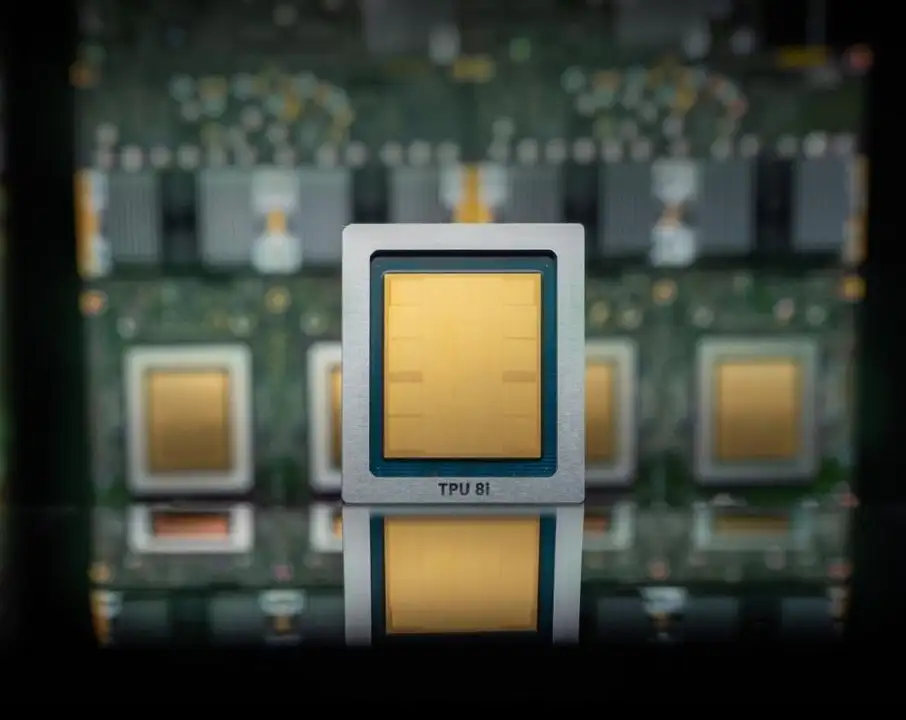

Google's eighth generation of custom AI chips is split into two specialized processors: the TPU 8t for training models and the TPU 8i for inference, the process of running models after they have been trained.

The company says the new chips deliver up to three times faster model training and up to 80 percent better performance per dollar than previous TPU generations. It also says more than one million TPUs can be linked in a single cluster, with the goal of providing more compute for less energy and cost.

These chips are not a replacement for Nvidia's hardware. Google says its cloud will continue to offer Nvidia-based systems, including systems built on Nvidia's upcoming Vera Rubin chip, expected later this year. The company positions its custom TPUs as a complement to Nvidia hardware within its infrastructure.

Patrick Moorhead, principal analyst at Moor Insights & Strategy:

"I had predicted that Google’s TPU could be bad news for Nvidia (and Intel) back in 2016 when the search giant launched its first one. Nvidia is now a nearly $5 trillion market cap company, meaning that prediction didn’t exactly hold up to the test of time."

> Always fun to dig up this 10 year old post on TPU. “Wondering what this means to NVIDIA and Intel”.
>
> — Patrick Moorhead (@PatrickMoorhead) April 22, 2026
>
> Google announced the TPU at the Google I/O event I attended on May 16, 2016. TPUs were stealth in operations for a year.
>
> Very little technical information was shared.… https://t.co/qnWWLiqcoR

Google also says it is working with Nvidia to improve the efficiency of Nvidia-based systems in its cloud. The collaboration centers on Falcon, a software-based networking technology that Google created and open-sourced in 2023.

Eventually, hyperscale cloud providers that build their own chips may rely less on Nvidia as enterprise AI workloads move to their platforms. For now, though, Google's growth as an AI cloud provider could still mean more business for Nvidia, even as many workloads run on Google's own silicon.

Source: Google Cloud