Large language models now participate in building the next generation of AI systems, a development that traces back to mathematician I. J. Good's 1966 speculation about machines designing superior successors. OpenAI disclosed in February that its GPT-5.3-Codex model handles training error debugging, deployment management, and evaluation analysis for its own development pipeline. Anthropic states that Claude Code now writes the majority of its internal code.

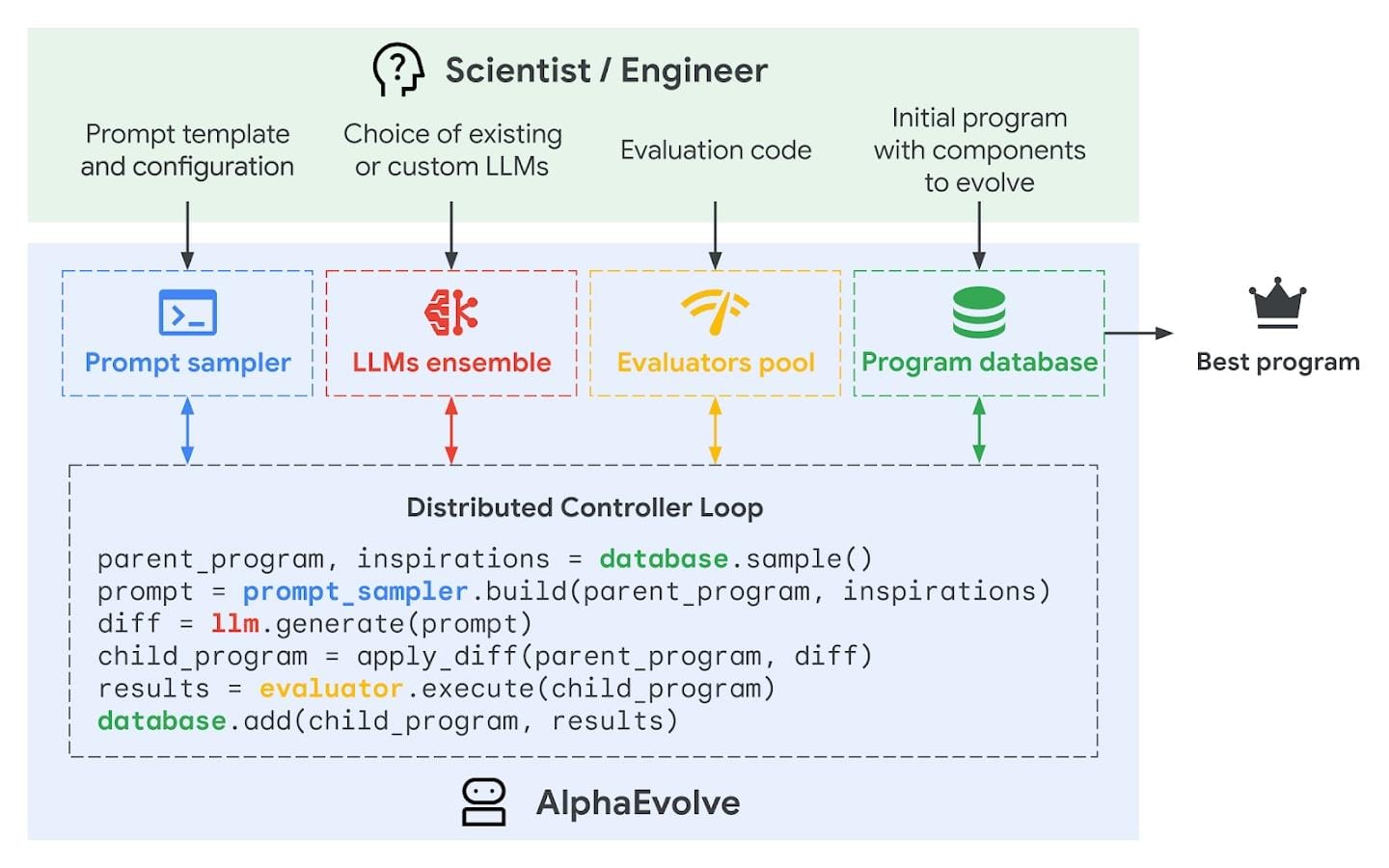

These systems take over workflows that previously required dedicated engineering hours, such as the manual iteration of routine code review and infrastructure scripting, but they do not replace human judgment. They still cannot define their own objectives or validate whether a deployed change serves human interests. Google DeepMind's AlphaEvolve optimizes neural network architectures and data center planning, yet humans determine which problems it solves and how performance is measured.

Matej Balog, a researcher at Google DeepMind, describes the current arrangement:

"Intense collaboration has formed between humans and machines. Some discoveries the system produces give researchers entirely new perspectives. The goal is to find algorithms beyond the reach of human intuition, and concrete examples are already beginning to emerge."

Google DeepMind's chip design project illustrates the staged approach. The first phase assists human designers. The second targets automation for companies without dedicated chip teams. The third, more distant phase would have AI designing better chips to train stronger AI. Company officials emphasize that removing human oversight entirely is not planned.

Self-modifying systems push further into autonomy. The Darwin Gödel Machines project from the University of British Columbia and Sakana AI uses evolutionary algorithms to develop large language model-based coding agents that can alter their own code. A related system, AI Scientist, published in Nature in March, automates the research cycle: it produces hypotheses, runs experiments, writes papers in scientific format, and evaluates them. This extends automation beyond coding into experimental and evaluative loops.
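To make the evolutionary idea above concrete, here is a deliberately small sketch of a mutate-evaluate-archive loop. It is not the Darwin Gödel Machines implementation: the "agent" is just a list of numbers, and `benchmark`, `self_modify`, and `evolve` are hypothetical names invented for illustration. In a real system the mutation step would be a language model rewriting agent code, and the score would come from a coding benchmark.

```python
import random


def benchmark(agent):
    """Hypothetical stand-in for a coding benchmark: higher is better, best is 0."""
    return -sum((x - 1.0) ** 2 for x in agent)


def self_modify(agent, rng, step=0.3):
    """Toy 'self-modification': perturb one parameter of a copy of the agent."""
    child = list(agent)
    i = rng.randrange(len(child))
    child[i] += rng.uniform(-step, step)
    return child


def evolve(generations=200, seed=0):
    """Grow an archive of agent variants, sampling parents in proportion to score."""
    rng = random.Random(seed)
    archive = [[0.0, 0.0, 0.0]]  # start from a single seed agent
    for _ in range(generations):
        scores = [benchmark(a) for a in archive]
        # Favor stronger parents, but keep weaker ones selectable
        # so the search does not collapse onto one lineage.
        low = min(scores)
        weights = [s - low + 1e-6 for s in scores]
        parent = rng.choices(archive, weights=weights, k=1)[0]
        archive.append(self_modify(parent, rng))
    return archive


archive = evolve()
best = max(archive, key=benchmark)
print(len(archive), round(benchmark(best), 3))
```

Note that the loop only measures what `benchmark` measures. Humans still choose that function, which is the point the article keeps returning to.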

What controls these capabilities matters as much as what they achieve. The AI Scientist system produces papers, but cannot independently verify whether its experimental designs are ethically sound or its conclusions reproducible. Darwin Gödel Machines can self-modify, but cannot assess whether a code change that improves performance also introduces instability or security vulnerabilities.

Researchers characterize current models as "adequate" at idea generation, implementation, and evaluation, not expert-level. Physical world processes remain a major barrier to full autonomy. Most experts argue humans will stay central to these systems.

Some specialists disagree. They argue that an uncontrolled AI development process remains possible and that the "intelligence explosion" scenario stays on the table. Self-improving models that are kept internal to a company and never see public release pose particular risks. These researchers call for strict oversight of advanced AI laboratories, with close monitoring of potential misuse in cyberattacks or biological weapons development.

The future ecosystem may center not on a single superintelligence but on numerous interacting AI agents. The critical question then becomes who controls the evaluation criteria, the deployment decisions, and the feedback loops between them.

Source: spectrum