Gemma 4's open models now outperform competitors twenty times their size on local hardware, delivering frontier-level reasoning without cloud dependency.

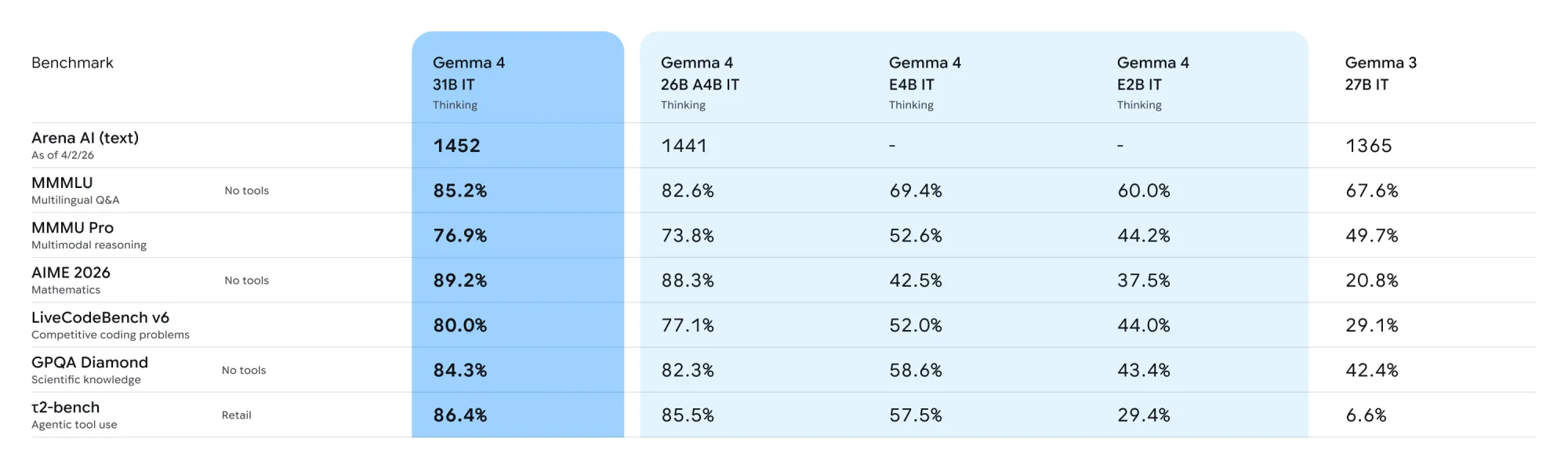

The family arrives in four sizes: Effective 2B (E2B), Effective 4B (E4B), 26B Mixture of Experts, and 31B Dense. The 31B model currently ranks as the #3 open model globally on Arena AI's text leaderboard, while the 26B variant holds the #6 position. Clément Delangue, co-founder and CEO of Hugging Face, welcomed the release:

"The release of Gemma 4 under an Apache 2.0 license is a huge milestone. We are incredibly excited to support the Gemma 4 family on Hugging Face on day one."

All models process video, images, and text natively. The edge-focused E2B and E4B variants add native audio input for speech recognition while running completely offline on phones, Raspberry Pi, and NVIDIA Jetson devices. Context windows span 128K tokens for edge models and 256K for larger variants, enabling full repository or document analysis in a single prompt.
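A quick way to reason about those context limits is to estimate whether a document fits before sending it. The sketch below is illustrative only: it assumes "128K" and "256K" mean 128,000 and 256,000 tokens, uses a rough four-characters-per-token heuristic instead of the real tokenizer, and the names `CONTEXT_LIMITS`, `estimate_tokens`, and `fits_in_context` are hypothetical.

```python
# Assumed limits: the article's "128K" and "256K" read as round thousands.
CONTEXT_LIMITS = {"edge": 128_000, "large": 256_000}

# Rough heuristic, NOT the model's tokenizer; real counts vary by language and content.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate the token count of text using ceiling division."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, tier: str, reserve_for_output: int = 4_000) -> bool:
    """Return True if the prompt plus a reserved output budget fits the tier's window."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_LIMITS[tier]
```

For an exact answer, replace the heuristic with a count from the model's own tokenizer; the budgeting logic stays the same.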

Developers can fine-tune Gemma 4 on consumer GPUs to improve performance on specific tasks. Early adopters include INSAIT, which built a Bulgarian-first language model, and Yale University, which applied the models to cancer therapy pathway discovery.

The Apache 2.0 license removes deployment restrictions, granting full control over data and infrastructure. Enterprises can run Gemma 4 on-premises, in sovereign clouds, or across hybrid environments without licensing barriers.

These capabilities have practical consequences. Agentic workflows with function calling and structured JSON output let autonomous systems interact with external APIs, while high-quality offline code generation turns local workstations into private AI assistants. The same models that power research can also process sensitive data without that data leaving secure environments.
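To make the function-calling idea concrete, here is a minimal host-side sketch of what happens after a model emits a structured JSON tool call. It assumes a simple `{"name": ..., "arguments": {...}}` shape, which is a common convention and not necessarily Gemma 4's exact output format; the `get_weather` tool, the `TOOLS` registry, and `dispatch_tool_call` are hypothetical names used for illustration.

```python
import json


def get_weather(city: str) -> dict:
    """Hypothetical tool; a real one would call an external API."""
    return {"city": city, "forecast": "sunny"}


# Hypothetical registry mapping tool names to local Python functions.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(model_output: str) -> dict:
    """Parse a JSON tool call (assumed shape) and run the matching function."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return {"error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"error": "tool call must be a JSON object"}

    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    if not isinstance(args, dict):
        return {"error": "arguments must be a JSON object"}

    try:
        return {"result": TOOLS[name](**args)}
    except TypeError as exc:
        # Raised when the model supplies missing or unexpected arguments.
        return {"error": f"bad arguments: {exc}"}
```

Validating the name and arguments before dispatch matters because model output is untrusted input: returning an error object lets the host feed the failure back to the model instead of crashing.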

Gemma 4 supports over 140 languages natively and integrates with tools including Hugging Face Transformers, vLLM, llama.cpp, Ollama, and NVIDIA NIM. Android developers can prototype agentic flows today via the AICore Developer Preview.

Whether the intelligence-per-parameter gains translate to broader real-world adoption depends on how quickly the developer ecosystem builds on the new foundation.

For now, the models are available for download on Hugging Face, Kaggle, and Ollama.