Compilers have always held a special place in computer science. Not because they're the flashiest technology, not because they make headlines — but because building one forces you to confront how software actually works at every level.

It touches language design, architecture, hardware, and the translation of human intent into precise machine behavior. For decades, writing a compiler was the rite of passage: the thing that separated engineers who understood what was really happening from those who didn't.

Now a machine is attempting that same passage.

Why a Compiler? Why Does This Matter?

Chris Lattner, the person who built LLVM, created Clang, and co-founded Modular, watched Anthropic's Claude C Compiler (CCC) project closely. His verdict was straightforward: this is real progress.

Compilers don't tolerate mistakes. A single incorrect transformation can silently break programs for thousands of users without a single error message. Every layer has to maintain strict rules while working in harmony with every other layer. And unlike most software, the success criterion is brutally clear: it either works or it doesn't.
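To make "silently break" concrete, here is a minimal Python sketch. It is not taken from CCC, and the helper names are hypothetical. It models 32-bit unsigned arithmetic and checks a tempting rewrite, `(x * 2) / 2` to `x`, which looks harmless but changes the result whenever the multiplication wraps around.

```python
MASK32 = 0xFFFFFFFF  # models the wraparound of a 32-bit unsigned integer


def original(x: int) -> int:
    """Evaluate (x * 2) / 2 with 32-bit unsigned wraparound semantics."""
    return ((x * 2) & MASK32) // 2


def bad_rewrite(x: int) -> int:
    """A tempting but incorrect simplification: (x * 2) / 2 -> x."""
    return x & MASK32


def find_mismatches(inputs):
    """Return the inputs on which the rewrite changes the program's result."""
    return [x for x in inputs if original(x) != bad_rewrite(x)]


if __name__ == "__main__":
    samples = [0, 1, 12345, 0x7FFFFFFF, 0x80000000, 0xFFFFFFFF]
    for x in find_mismatches(samples):
        print(f"x={x:#010x}: original={original(x):#010x}, "
              f"rewritten={bad_rewrite(x):#010x}")
```

Every small input agrees, so a casual test run would pass. Only values with the top bit set expose the difference, and nothing in the compiled program reports an error when it happens.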

That's exactly what makes it such a revealing benchmark for AI. Earlier AI coding tools were impressive at local tasks — writing a function, completing a snippet, filling in boilerplate.

Those test pattern recognition over short distances. The Claude C Compiler operates at a different level entirely. It maintained coherence across an entire engineering system, coordinated multiple subsystems, preserved architectural structure, and iterated toward correctness through a feedback loop of tests and failures.

This is AI moving from code completion to engineering participation. That's not a small distinction.

What's Actually Inside CCC

Anthropic didn't just publish a polished result or a benchmark score. They released the full source history, design documents, and future plans — everything open for inspection. Lattner spent time doing exactly that.

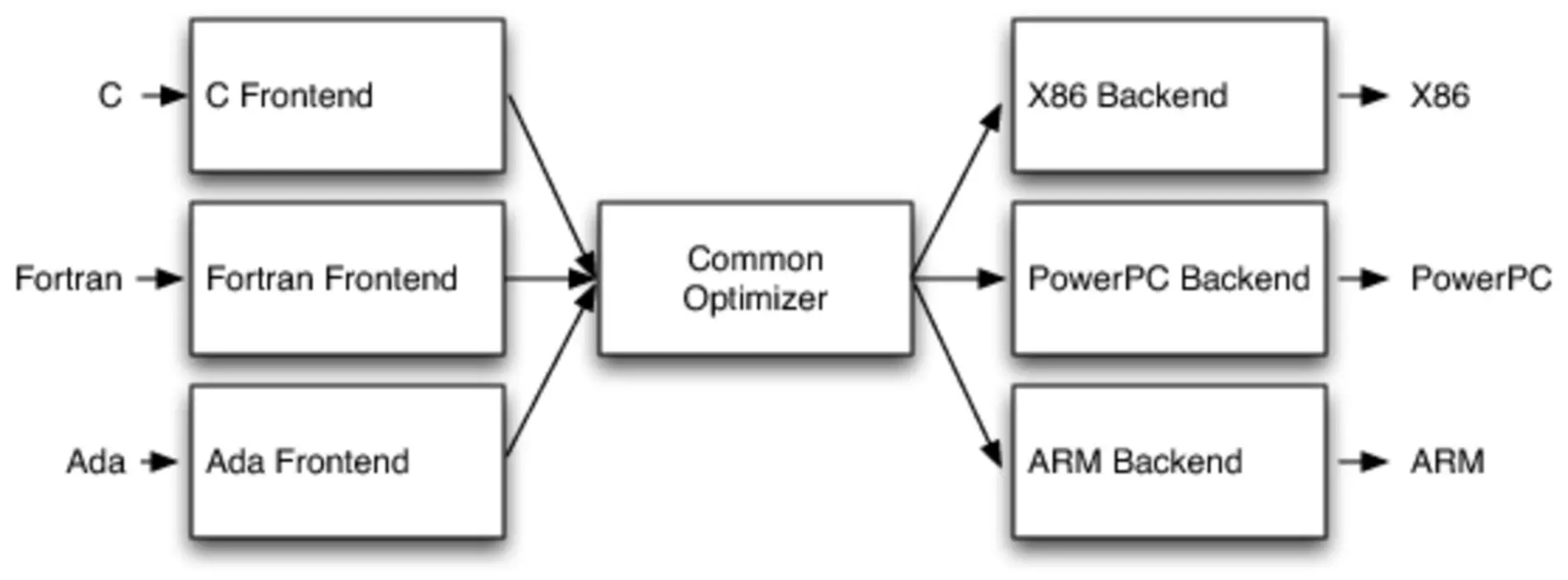

The first major commit essentially one-shots the entire architecture. From the very beginning, CCC follows a classic compiler structure:

- Frontend: Preprocessor, parser, and semantic analysis

- Intermediate representation and optimizations: A design directly inspired by LLVM

- Backend: Code generation for four architectures — x86-32, x86-64, RISC-V, and AArch64

The LLVM fingerprints are everywhere. Instructions like GetElementPtr, basic block terminators, the Mem2Reg pass — any LLVM developer would recognize these immediately. Lattner doesn't frame this as a problem: "I learned from GCC when I built Clang," he says. The criticism that CCC learned from prior art strikes him as ridiculous.
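For readers who have not met Mem2Reg, the idea can be sketched in a few lines of Python. This is a deliberately simplified illustration, not CCC's or LLVM's implementation: the `Instr` type and the `mem2reg_single_block` function are hypothetical, the pass handles only a single basic block, and it assumes each stack slot is used only by loads and stores and is stored to before it is loaded. A real pass also has to insert phi nodes where control flow merges.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Instr:
    op: str                        # "alloca", "store", "load", "add", "ret"
    dest: Optional[str] = None     # name of the value this instruction defines
    args: Tuple[str, ...] = ()     # store: (value, slot); load: (slot,)


def mem2reg_single_block(block):
    """Promote stack slots to plain values within one basic block."""
    slots = {i.dest for i in block if i.op == "alloca"}
    current = {}    # slot -> value most recently stored into it
    forwarded = {}  # removed load result -> value that replaces it
    out = []
    for ins in block:
        # Rewrite operands that referred to a load we have already removed.
        args = tuple(forwarded.get(a, a) for a in ins.args)
        if ins.op == "alloca":
            continue                                # the slot itself disappears
        if ins.op == "store" and args[1] in slots:
            current[args[1]] = args[0]              # remember the stored value
            continue
        if ins.op == "load" and args[0] in slots:
            forwarded[ins.dest] = current[args[0]]  # forward it to later users
            continue
        out.append(Instr(ins.op, ins.dest, args))
    return out


if __name__ == "__main__":
    block = [
        Instr("alloca", "%x.addr"),
        Instr("store", None, ("%a", "%x.addr")),
        Instr("load", "%t0", ("%x.addr",)),
        Instr("add", "%t1", ("%t0", "%b")),
        Instr("store", None, ("%t1", "%x.addr")),
        Instr("load", "%t2", ("%x.addr",)),
        Instr("ret", None, ("%t2",)),  # the block terminator
    ]
    for ins in mem2reg_single_block(block):
        print(ins)
```

In this example the seven instructions collapse to two: an `add` of `%a` and `%b`, followed by a `ret` of its result. All memory traffic through `%x.addr` is gone.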

What emerges from the repository looks less like a research experiment and more like a competent textbook implementation — the kind a strong undergraduate team might produce early in a project, before years of hardening and production experience.

Where CCC Gets It Wrong

The mistakes are the most instructive part.

Several design choices suggest the system optimized for passing tests rather than building durable, general abstractions:

- The code generator re-parses assembly text instead of using a proper intermediate representation. This is a meaningful architectural weakness, not a minor rough edge.

- The parser has weak error recovery and handles certain corner cases incorrectly.

- Rather than genuinely parsing system header files — which are far messier than application code — CCC hard-codes what it needs for its tests directly into the source.
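The first point is easier to see with a contrast. The sketch below is a hypothetical illustration in Python, not code from CCC: `MachineInstr`, `remove_self_moves`, and `emit` are made-up names, and the register names are only examples. It shows the conventional alternative, in which late passes operate on structured instruction objects and assembly text is produced once, at the very end, and never read back.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class MachineInstr:
    opcode: str
    operands: Tuple[str, ...] = ()


def remove_self_moves(instrs: List[MachineInstr]) -> List[MachineInstr]:
    """Peephole pass on structured data: drop moves of a register to itself."""
    return [
        i for i in instrs
        if not (i.opcode == "mov"
                and len(i.operands) == 2
                and i.operands[0] == i.operands[1])
    ]


def emit(instrs: List[MachineInstr]) -> str:
    """Print assembly text as the final step; nothing parses this output."""
    lines = []
    for i in instrs:
        text = i.opcode
        if i.operands:
            text += " " + ", ".join(i.operands)
        lines.append("    " + text)
    return "\n".join(lines)


if __name__ == "__main__":
    code = [
        MachineInstr("mov", ("rax", "rax")),
        MachineInstr("add", ("rax", "rbx")),
        MachineInstr("ret"),
    ]
    print(emit(remove_self_moves(code)))
```

Because the pass compares operand fields directly, it cannot be confused by whitespace, comments, or syntax variants in the printed text, which is the kind of fragility that re-parsing assembly tends to introduce.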

That last issue is the most telling. It means CCC can't generalize meaningfully beyond the test suite it was built against. The bug tracker confirms this. Current AI systems are genuinely excellent at assembling known techniques and iterating toward measurable success criteria. Where they struggle is the open-ended generalization that production-quality systems demand.

What This Actually Tells Us About AI Coding

The deepest lesson from CCC isn't that AI can build a compiler. It's how it built one.

CCC didn't invent a new architecture. It didn't explore unfamiliar design territory. It reproduced something strikingly close to the accumulated consensus of decades of compiler engineering — structurally correct, familiar, grounded in well-understood techniques. Trained on GCC, LLVM, and decades of academic literature, the result naturally reflects that lineage.

This aligns closely with Richard Sutton's Bitter Lesson: scalable methods rediscover broadly successful structures. Modern language models are extraordinarily powerful distribution followers. They learn what has been widely written and reinforced, then generate solutions near the center of that collective experience.

Lattner draws a line that's worth sitting with:

Implementing known abstractions is not the same as inventing new ones.

Historical progress in compilers didn't come from assembling standard components quickly. It came from conceptual leaps: new intermediate representations, new optimization models, new ways of thinking about how programs and hardware interact. None of that appears in CCC. The system is impressive in scope, but there's nothing genuinely novel in what it produced.

AI coding, then, is best understood as automation of implementation. It dramatically lowers the cost of building known things. As that cost falls, the scarce resource shifts upward, toward deciding what systems should exist and how software should evolve.

Intellectual Property and the Legal Lag

CCC also surfaces a question the industry has been quietly avoiding: when an AI system trained on decades of open-source code reproduces familiar structures, patterns, and specific implementations — where exactly is the line between learning and copying?

Observers have pointed to cases where CCC outputs bear a striking resemblance to existing implementations, including standard headers and utility code, despite Anthropic's claims of clean-room development. Current legal frameworks were built for humans who reference source material explicitly. They don't map cleanly onto systems that learn statistically from vast bodies of prior work.

Lattner acknowledges the tension but doesn't think it kills proprietary software as a concept. AI lowers the cost of reproducing established designs, which shifts competitive advantage away from isolated codebases and toward execution, ecosystems, and continuous innovation.

The legal and institutional norms will have to evolve — much like they did when Linux and open source first disrupted established assumptions about software ownership.

When Cost Drops, Ambition Expands

History has a consistent pattern here. When the cost of building something drops dramatically, we don't just build the same things more cheaply — we build entirely different things.

Compilers proved this themselves. When programmers no longer had to write assembly by hand, they didn't become less ambitious. They became vastly more ambitious, and entire industries emerged from that unlocked ambition.

The same dynamic applies now. As writing code gets easier, the likely outcome isn't fewer programmers. It's more software, more experimentation, more specialized tools, and solutions to problems that weren't previously worth automating.

What actually changes is the economics of specific kinds of engineering work. Rewrites, migrations, boilerplate implementations — necessary tasks, rarely innovative ones.

AI systems are unusually well-suited to exactly this category. Engineers stop typing implementations and start directing systems: specifying intent, validating outcomes, shaping architecture.

The limiting factor shifts. It's no longer whether software can be built. It's deciding what should be built and managing the complexity that follows.

What Happens to the Engineers

Every major shift in software development has redefined what it means to be a programmer. Early engineers managed hardware directly. Later generations learned to trust compilers and higher-level languages. Each transition removed manual work and raised the bar for what engineers were expected to accomplish.

AI coding is the next step in that same progression. The most effective engineers won't compete with AI at producing code. They'll collaborate with it, using it to explore ideas faster, iterate more broadly, and concentrate human effort on direction and design.

The data already shows the divergence. According to CircleCI's 2026 State of Software Delivery Report, the top 5% of engineering teams nearly doubled their output year-over-year while the bottom half stagnated. The most productive team in 2025 delivered roughly ten times the throughput of 2024's leader. The gap is measurable, and it's widening fast.

How Modular Is Actually Responding

Lattner translates all of this into three concrete expectations for his team — not as abstract philosophy but as operational reality.

First: adopt aggressively, stay accountable. Every employee across every function is expected to actively use AI tools to accelerate their work. But this doesn't transfer responsibility to the tool. Work produced with AI should be understood, validated, and owned just as deeply as anything written by hand. Reputation is built on outcomes, not on prompts.

Second: move human effort up the stack. A large fraction of historical engineering work has gone into mechanical tasks — rewrites, interface adaptations, system migrations. AI is rapidly becoming better at these than humans. The answer isn't to compete with that. Engineers should be directing where systems go next, not re-implementing what already exists.

Third: invest in structure and community. AI amplifies structure — both good and bad. Well-documented systems become dramatically easier to evolve. Poorly structured systems scale into confusion faster than ever. Documentation, clean interfaces, and explicit design intent are no longer optional overhead. They're operational leverage. And as implementation costs approach zero, aligning people around shared goals becomes the real constraint.

The Claude C Compiler is not a product. It's a signal — and reading it correctly may determine which engineering teams pull ahead over the next five years and which ones quietly fall behind.

The fact that AI can now build something as demanding as a compiler puts the old question, "Can AI write code?", firmly to rest. The question that actually matters now is: which engineering decisions still belong to humans?

Lattner's analysis points to a clear answer. Applying known patterns is increasingly the machine's territory. Defining new abstractions, imagining what systems don't yet exist but should, and ensuring those systems remain comprehensible to human beings over time: that's where human judgment holds its ground.

There's a practical implication here that most teams haven't fully internalized yet. Good architecture documentation is no longer just professional hygiene. In an environment where AI systems amplify whatever structure they're given, a well-documented codebase is a force multiplier. An undocumented one is a liability that compounds.

The engineers who thrive in this environment won't be the fastest typists or even the most technically fluent. They'll be the ones who can look at a problem space and ask the right question before anyone else does: is this worth building, and if so, what should it actually be? Code has always been a tool. In the age of AI, vision becomes the scarce resource — and the most expensive one.