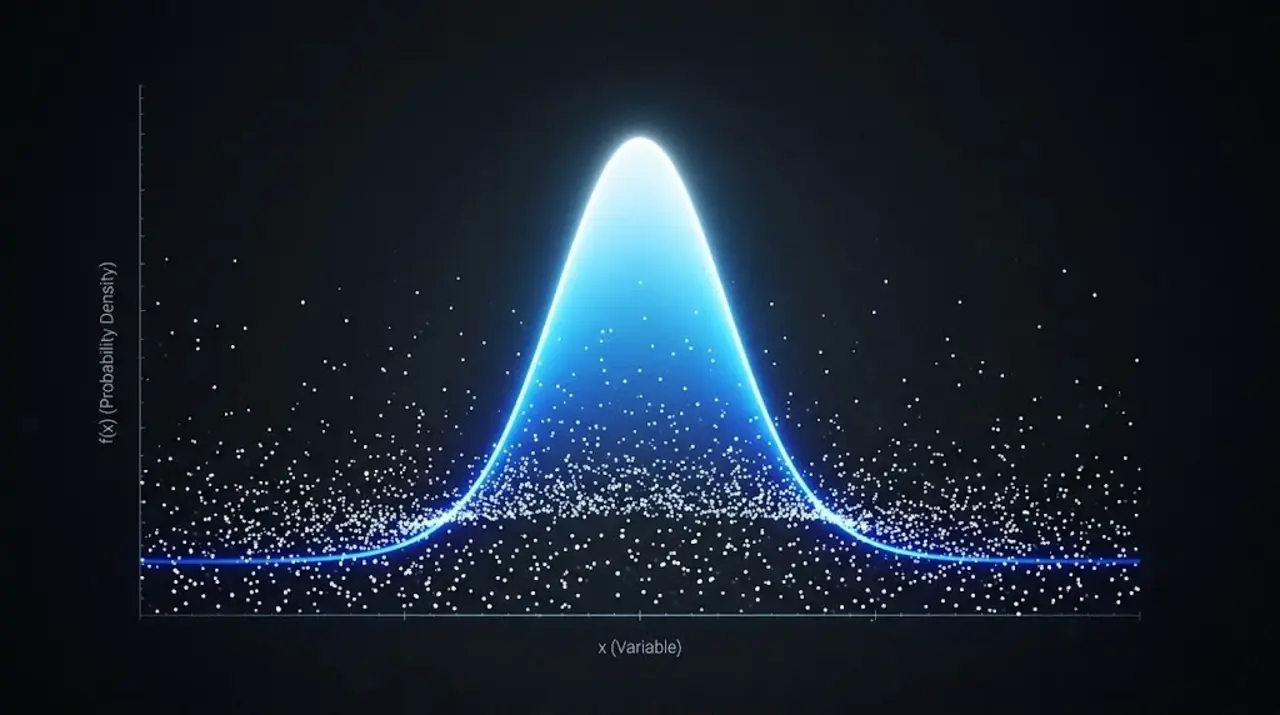

Flip a coin a million times and the chaos of each toss dissolves into the perfect symmetry of a bell curve—mathematics turning randomness into reliable structure.

The central limit theorem (CLT) states that the average of many independent random samples is approximately normally distributed (the bell curve), regardless of the shape of the original distribution, provided that distribution has finite variance. Daniela Witten, a biostatistician at the University of Washington, described it as "pretty amazing because it is so unintuitive and surprising."
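A small simulation can make this concrete. The sketch below is illustrative only: the exponential distribution as the skewed starting point, the sample sizes, the trial count, and the helper names `sample_means` and `skewness` are all assumptions chosen for the example. It measures how lopsided the distribution of the sample mean is as the number of draws per mean grows.

```python
import random
import statistics

def sample_means(n, trials, rng):
    """Return `trials` means, each computed from `n` draws of Exponential(1)."""
    return [
        statistics.fmean(rng.expovariate(1.0) for _ in range(n))
        for _ in range(trials)
    ]

def skewness(values):
    """Third standardized moment; close to 0 for a symmetric distribution."""
    mu = statistics.fmean(values)
    sigma = statistics.pstdev(values)
    return statistics.fmean(((v - mu) / sigma) ** 3 for v in values)

rng = random.Random(0)  # fixed seed so repeated runs give the same numbers
for n in (1, 5, 50, 500):
    means = sample_means(n, trials=2000, rng=rng)
    print(f"n={n:4d}  skewness of sample means = {skewness(means):.2f}")
```

For an exponential distribution the skewness of the mean of n draws is 2/√n in theory, so the printed values should shrink toward zero as n grows, which is the bell curve's symmetry emerging from a strongly skewed source.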

The theorem's roots trace back to 1733, when Abraham de Moivre first outlined the normal distribution while advising gamblers. Pierre-Simon Laplace generalized the result in 1810. De Moivre calculated that the chance of 45–55 heads in 100 fair coin flips is about 68 % under the bell-curve approximation (the exact binomial value is closer to 73 %), an early demonstration of how averages stabilize into predictable shapes.
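The coin-flip figure can be checked directly. The sketch below, which uses only the Python standard library and a hypothetical helper named `binom_prob`, compares the exact binomial probability of 45 to 55 heads with two normal approximations. With 100 fair flips the mean is 50 heads and the standard deviation is 5, so 45 to 55 is the one-standard-deviation band.

```python
from math import comb
from statistics import NormalDist

def binom_prob(lo, hi, n=100):
    """Exact P(lo <= heads <= hi) for n fair coin flips."""
    return sum(comb(n, k) for k in range(lo, hi + 1)) / 2**n

n = 100
mean, sd = n * 0.5, (n * 0.25) ** 0.5  # 50 heads, standard deviation 5
normal = NormalDist(mean, sd)

exact = binom_prob(45, 55, n)
one_sigma = normal.cdf(55) - normal.cdf(45)      # plain one-sd band
corrected = normal.cdf(55.5) - normal.cdf(44.5)  # with continuity correction

print(f"exact binomial:        {exact:.4f}")
print(f"normal, 45 to 55:      {one_sigma:.4f}")
print(f"normal, 44.5 to 55.5:  {corrected:.4f}")
```

The plain one-standard-deviation band gives the familiar figure of about 68 %, while the exact sum over the eleven outcomes 45 through 55 comes out near 73 %. Widening the normal band by half a flip on each side (the continuity correction) closes most of that gap.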

Modern statistics relies on this convergence. Larry Wasserman, a statistician at Carnegie Mellon University, observed, "I don’t think the field of statistics would exist without the central limit theorem." By enabling scientists to estimate population parameters from sample means, the CLT underlies most inferential tools used across the sciences.

The theorem demands two conditions: large sample sizes and independence. When samples are small or observations are clustered (and therefore not independent), the distribution of the mean may stay skewed or heavy-tailed, and the bell curve may fail to appear. Richard D. De Veaux of Williams College noted that "these days, modeling extreme events is probably as important as modeling the mean," highlighting situations where averages tell only part of the story.

Despite its reach, the CLT offers no protection against rare, high-impact deviations. The chance of observing 35 or fewer heads in 100 fair flips, three standard deviations below the mean, is still roughly 0.2 %, illustrating how tail behavior can surprise even when the bulk of the distribution behaves.
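Tail figures like this are easy to compute exactly, and doing so shows how quickly they fall off. The sketch below is a minimal check using only the standard library; the helper name `lower_tail` and the thresholds of 40, 35, 30, and 20 heads are illustrative choices. It prints the exact binomial tail next to the normal approximation for each threshold.

```python
from math import comb
from statistics import NormalDist

def lower_tail(k, n=100):
    """Exact P(heads <= k) for n fair coin flips."""
    return sum(comb(n, j) for j in range(k + 1)) / 2**n

normal = NormalDist(50, 5)  # mean 50 heads, standard deviation 5
for k in (40, 35, 30, 20):
    z = (k - 50) / 5  # distance from the mean in standard deviations
    print(f"k={k}: z={z:+.0f}  exact={lower_tail(k):.3e}  normal={normal.cdf(k):.3e}")
```

A tail probability in the neighborhood of 0.15 % corresponds to roughly three standard deviations, or about 35 heads. At 20 heads, six standard deviations out, the exact probability is smaller by many orders of magnitude, and the relative gap between the exact value and the normal approximation widens the farther out one looks.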

Source: Quanta Magazine